Francis Xiatian Zhang, PhD

I am a Research Associate at the University of Edinburgh working on geometry-aware computer vision systems for clinical video analysis, with a focus on bronchoscopy, surgical workflow modeling, and endoscopic video understanding. My work integrates explicit geometric structure (depth, graphs, topology) into deep learning pipelines to improve robustness, interpretability, and deployment readiness in safety-critical medical settings.

Prior to this, I received my MRes degree from King's College London, my MSc degree from the University of Southampton, and my Bachelor's degree from Beijing University of Chinese Medicine.

I am particularly interested in AI systems and ML engineering roles that build and deploy computer vision systems for real-world medical or other safety-critical environments.

Email / Academic Email / University Profile / GitHub / Google Scholar / LinkedIn / Twitter

News

- 2/2026: Three papers at ICRA — ROOM: A Physics-Based Continuum Robot Simulator for Photorealistic Medical Datasets Generation, A Model-Based Framework for Assessing Operator Performance in Navigational Bronchoscopy (preprint soon), and Geometry-Aware Visual Odometry for Bronchoscopic Navigation via High-Gain Observer Fusion (preprint soon) — accepted.

- 1/2026: Understanding psychiatrist readiness for AI: a study of access, self-efficacy, trust, and design expectations (BMC Health Services Research) — accepted.

- 6/2025: Two papers at MICCAI 2025 — Navigational Bronchoscopy in Critical Care via End-to-End Pose Regression (accepted) and BREA-Depth: Bronchoscopy Realistic Airway-geometric Depth Estimation (early accepted).

- 5/2025: My PhD thesis received final approval. It's official: I now hold a PhD!

- 1/2025: I passed my PhD viva with minor corrections. Examiners: Dr. Hansung Kim and Dr. Stamos Katsigiannis.

- 12/2024: Adaptive Graph Learning from Spatial Information for Surgical Workflow Anticipation (IEEE Transactions on Medical Robotics and Bionics) — accepted.

- 11/2024: I began serving as a Research Associate at the University of Edinburgh (PI: Dr. Mohsen Khadem).

- 9/2024: Unraveling the brain dynamics of Depersonalization-Derealization Disorder: a dynamic functional network connectivity analysis (BMC Psychiatry) — accepted.

- 8/2024: Advancing healthcare practice and education via data sharing: demonstrating the utility of open data by training an artificial intelligence model to assess cardiopulmonary resuscitation skills (Advances in Health Sciences Education) — accepted. Dataset.

- 6/2024: Depth-Aware Endoscopic Video Inpainting (MICCAI 2024) — accepted.

- 12/2023: Pose-based Tremor Type and Level Analysis for Parkinson's Disease from Video (International Journal of Computer Assisted Radiology and Surgery) — accepted.

- 11/2023: I began working as a Research Assistant on the project Course Development in Edge Computing and Analytics 2.0 (PI: Dr. Anish Jindal).

- 8/2023: Correlation-Distance Graph Learning for Treatment Response Prediction from rs-fMRI (ICONIP 2023) — accepted.

- 4/2023: The Treatment of Depersonalization-Derealization Disorder: A Systematic Review (Journal of Trauma & Dissociation) — accepted.

Recent Projects

I design and build applied machine learning systems for safety-critical and data-constrained domains, with an emphasis on end-to-end pipelines: data curation, model training, evaluation, and deployment-ready inference. My work spans geometry-aware perception, temporal and graph-based modeling, and reproducible tooling that produces integration-friendly outputs (e.g., depth, graphs, structured predictions) for downstream systems. If you’d like to collaborate, please reach out at francis.xiatian.zhang@outlook.com (primary) or francis.zhang@ed.ac.uk (academic).

BREA-Depth

Bronchoscopy Realistic Airway-geometric Depth Estimation

Publishes ready-to-run inference code for bronchoscopic navigation research stacks, adapting foundation models to bronchoscopy footage so downstream perception modules inherit reliable depth.

- Produces stable depth estimates that downstream planners can use for CT-to-video alignment and collision checks.

- Pipeline: synthetic airway renderer → domain adaptation → depth inference package → evaluation harness, all versioned so surgical robotics teams can reproduce deployments.

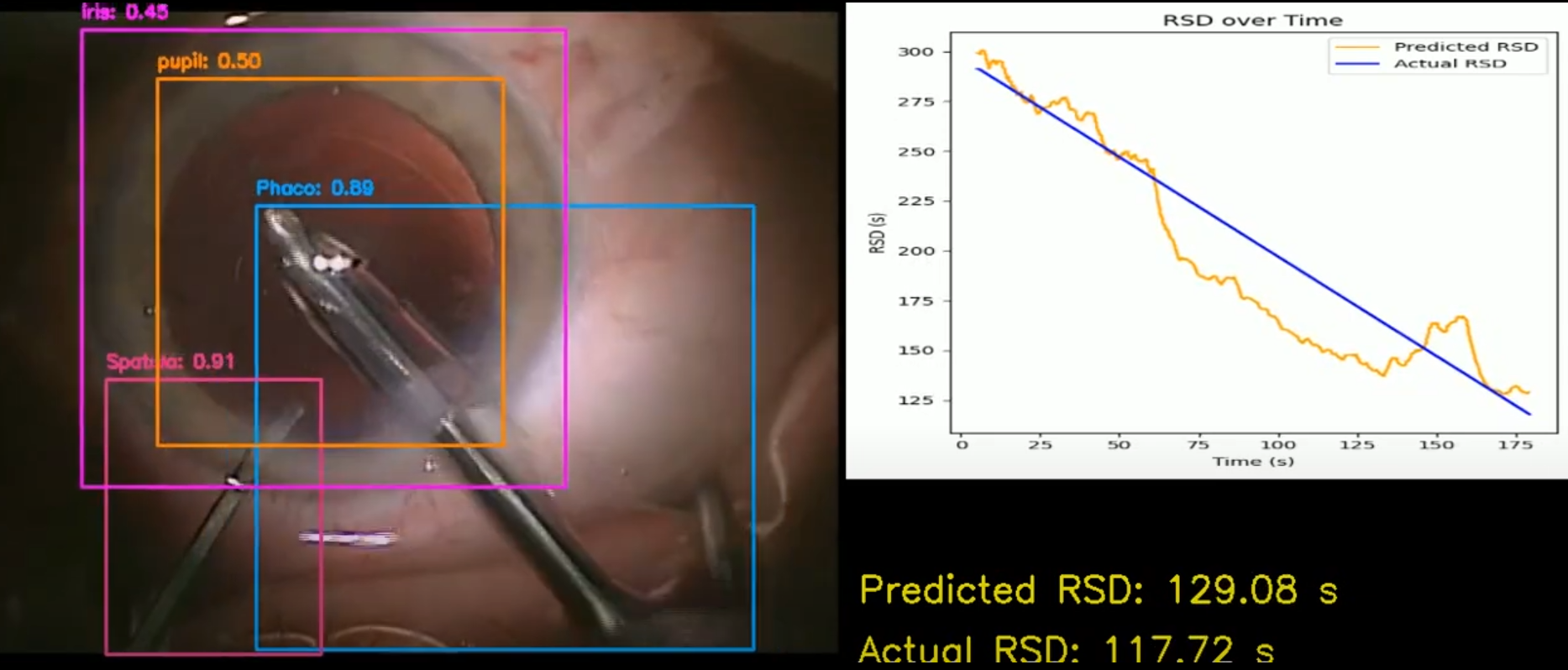

Adaptive Graph Learning

Adaptive Graph Learning from Spatial Information for Surgical Workflow Anticipation

Turns live tool detections into actionable workflow forecasts so that scrub nurses and in-room displays can anticipate what to prepare next.

- Continuously re-learns instrument relationships from spatial cues to surface impending steps minutes ahead.

- Prototype pipeline: detector feed → spatial graph builder → anticipation head → dashboard concept, with warning throttling heuristics and fallbacks when detections drop.

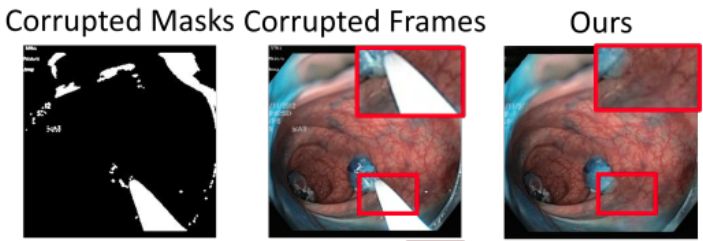

DAEVI

Depth-Aware Endoscopic Video Inpainting

Provides a depth-aware inpainting toolkit that teams can run locally to clean surgical video archives before research sharing or dataset release.

- Accepts raw video plus masks and returns temporally consistent fills, ready for downstream training or review.

- Bundled training/inference scripts with deterministic preprocessing and checkpoints so biomedical researchers can rerun inpainting and compare outputs to their own reference sets.

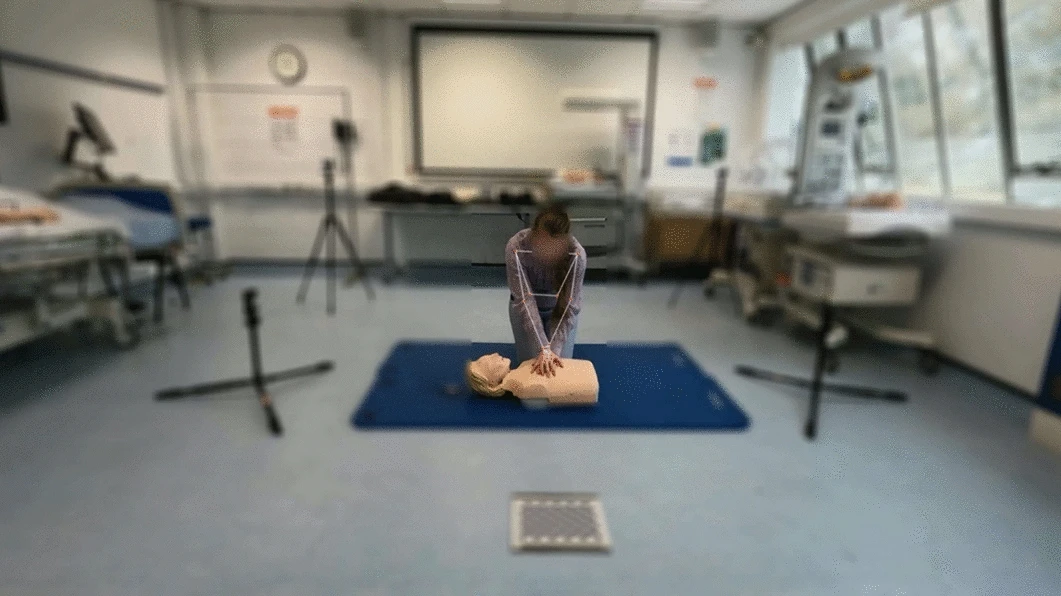

CPR Skill Assessment

AI Assessment of CPR Skills via Open Data

Uses multi-view pose estimation and graph models to score CPR technique from shared datasets for education and training.

- Built a multi-view pose pipeline and ST-GCN scoring model for skill rating.

- Published an open dataset and evaluation protocol to support reproducible benchmarking.

DPD Brain Dynamics

Dynamic Functional Connectivity for Depersonalization-Derealization Disorder (DPD)

Analyzes dynamic functional network connectivity to characterize depersonalization-derealization disorder brain dynamics.

- Built neuroimaging processing, clustering, and statistical analysis pipelines for dynamic connectivity.

- Quantified state transitions and connectivity patterns to support clinical interpretation.

Grants Involved

- U-care: Deep Ultraviolet Light Therapies

Research Associate, EPSRC Grant: £6,132,366 (PI: Dr. Mohsen Khadem)

Funded by UKRI, 2021–2026 (I joined in 2024)

- Course Development in Edge Computing and Analytics 2.0

Research Assistant, UK-Egypt Trans-National Education (TNE) Grant: £30,000 (PI: Dr. Anish Jindal)

Funded by the British Council, UK, 2022–2024

- Pose Estimation for Health Professional Education: Development of an Objective Computerized Approach for Measuring and Assessing Technical Competencies in Nursing

Research Assistant, Northumbria University Application Seed Funding Scheme: £16,428 (PI: Dr. Merryn Constable)

Funded by Northumbria University, UK, 2022

Research

My previous research falls mainly into three areas: Computer-assisted Intervention, Clinical Outcome Analysis, and Evidence-based Medicine. Selected publications are listed below; a complete list is available on my Google Scholar page.

Conference Papers:

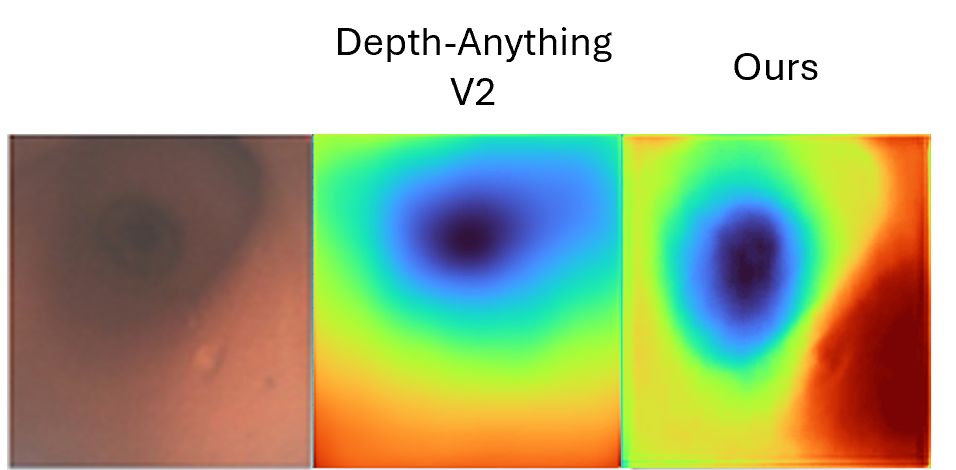

- BREA-Depth: Bronchoscopy Realistic Airway-geometric Depth Estimation

Francis Xiatian Zhang, Emile Mackute, Mohammadreza Kasaei, Kevin Dhaliwal, Robert Thomson and Mohsen Khadem

MICCAI 2025 | paper | arXiv | code

Contribution: Delivered an airway-aware depth estimation system for bronchoscopic navigation that adapts foundation models via Depth-aware CycleGAN translation and airway structure losses, and introduces Airway Depth Structure Evaluation metrics.

- Depth-Aware Endoscopic Video Inpainting

Francis Xiatian Zhang, Shuang Chen, Xianghua Xie and Hubert P. H. Shum

MICCAI 2024 | paper | arXiv | code

Contribution: Built a depth-aware inpainting system that pairs Spatial-Temporal Guided Depth Estimation with bi-modal fusion and a depth-enhanced discriminator to recover occluded anatomy.

- Pose-Based Tremor Classification for Parkinson's Disease Diagnosis from Video

Haozheng Zhang, Edmond S. L. Ho, Francis Xiatian Zhang and Hubert P. H. Shum

MICCAI 2022 | paper | arXiv | code

Contribution: Led dataset curation and clinical alignment for a pose-based tremor analysis system, ensuring label validity, evaluation protocol design, and meaningful clinical interpretation.

Journal Papers:

- Adaptive Anticipation: Adaptive Graph Learning From Spatial Information for Surgical Workflow Anticipation

Francis Xiatian Zhang, Jingjing Deng, Robert Lieck and Hubert P. H. Shum

IEEE Transactions on Medical Robotics and Bionics 2024 | paper | arXiv | code

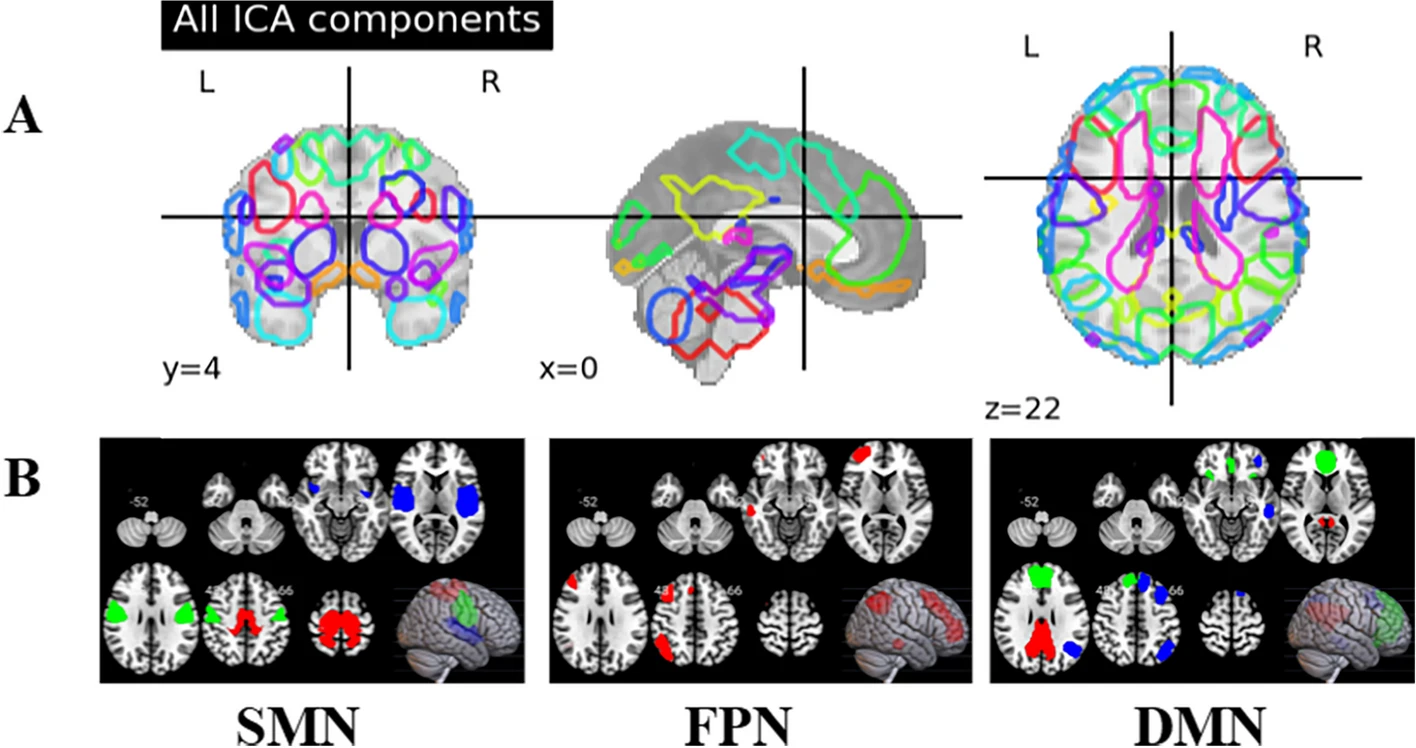

Contribution: Engineered an adaptive spatial graph learner that updates tool adjacencies on the fly to forecast surgical workflow transitions with interpretable attention.

- Unraveling the brain dynamics of Depersonalization-Derealization Disorder: a dynamic functional network connectivity analysis

Sisi Zheng, Francis Xiatian Zhang*, Hubert P. H. Shum, Haozheng Zhang, Nan Song, Mingkang Song and Hongxiao Jia

BMC Psychiatry 2024 | paper

Contribution: Built neuroimaging processing, clustering, and statistical analysis pipelines to quantify brain dynamics.

- Advancing healthcare practice and education via data sharing: demonstrating the utility of open data by training an artificial intelligence model to assess cardiopulmonary resuscitation skills

Merryn D. Constable, Francis Xiatian Zhang*, Tony Conner, Daniel Monk, Jason Rajsic, Claire Ford, Laura Jillian Park, Alan Platt, Debra Porteous, Lawrence Grierson and Hubert P. H. Shum

Advances in Health Sciences Education 2024 | paper | code | dataset

Contribution: Built a multi-view skill-rating ST-GCN pipeline with pose estimation to score CPR performance.

* = co-first author.

Experience

- Research Associate at The University of Edinburgh, 11/2024–present.

Develops deep learning visual navigation prototypes for robotic bronchoscopy—airway segmentation, geometric graph construction, and failure-aware inference evaluated on recorded clinical video streams.

PI: Dr Mohsen Khadem

- Research Assistant at Durham University, 10/2023–11/2023; 7/2024–9/2024.

Delivered the Edge Computing and Analytics 2.0 course by teaching end-to-end model workflows—from training to ONNX export and deployment through Python APIs with lightweight GUIs.

PI: Dr Anish Jindal

- Demonstrator at Durham University, 11/2021–6/2024.

Led weekly lab support for the Computational Thinking, Data Science, Programming for Data Science, and Text Mining modules: debugging starter code, clarifying marking rubrics, and escalating stubborn tooling issues to instructors.

- Student Helper at Durham University, 8/2022–9/2022.

Handled visiting-speaker transport, coach bookings, and leisure tours around Durham Castle for the 21st ACM SIGGRAPH/Eurographics Symposium on Computer Animation (SCA 2022).

- Research Assistant at Northumbria University, 4/2022–7/2022.

Set up multi-camera capture, collected nursing simulation footage, and led training of the automatic rating system that turned pose-estimation outputs into competency scores.

PI: Dr Merryn Constable